Best AI Coding Tools for 2026


Shipping used to hinge on how fast you could type. Now it hinges on how fast you can ask for the right change and verify it. The wrong AI coding tools create noise at scale; the right ones compress the distance from intent to working code. This review is unapologetically opinionated: if a tool does not raise your throughput and confidence on a real repo under real deadlines, it did not make the cut.

You can feel it in your editor: autocomplete got smart, chat moved into the codebase, and agents keep asking for your terminal. The temptation is to hoard features and chase demos, but velocity comes from depth, not breadth. Choose a small, interoperable stack that compounds with your habits and your code graph. The thesis is simple: adopt tools that shrink the loop between “what I mean,” “what I typed,” and “what runs,” and ignore everything that does not tighten that loop.

Why AI Coding Tools?

AI does not eliminate work; it relocates the bottleneck from mechanics to models of the problem. The best assistants make high-entropy tasks (unknown APIs, legacy code spelunking, mechanical refactors) behave like low-entropy tasks you can dispatch quickly. When that happens, your cognitive budget shifts to architecture, invariants, and naming—the parts only you can do.

Here is the counterintuitive bit: raw speed is not the goal. Reliability of iteration is. Tools that encourage tiny, verifiable steps keep you shipping; tools that produce huge diffs and vibes inflate your review queue and your blood pressure. Measure impact in verified commits per day, not tokens per minute.
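To make "verified commits per day" concrete, here is a minimal sketch in Python. It assumes you can already export each commit as a (day, verified) pair, where "verified" means whatever your team decides it means (for example, CI passed and a reviewer approved). The function name and the sample data are hypothetical.

```python
from collections import Counter
from datetime import date


def verified_commits_per_day(commits):
    """Count commits per calendar day, keeping only the verified ones.

    `commits` is an iterable of (day, verified) pairs. What counts as
    "verified" is an assumption you define yourself.
    """
    return Counter(day for day, verified in commits if verified)


# Hypothetical sample data, for illustration only.
sample = [
    (date(2026, 1, 5), True),
    (date(2026, 1, 5), False),  # landed, but never verified: not counted
    (date(2026, 1, 5), True),
    (date(2026, 1, 6), True),
]

per_day = verified_commits_per_day(sample)
average = sum(per_day.values()) / len(per_day)
```

Track the average over weeks rather than days; a single day's number is mostly noise.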

How Do AI Agents Work?

An AI coding agent is not a wizard; it is a control loop that translates intent into a sequence of tool calls against your repo. The good ones do three things well: they extract a plan from your prompt, they ground every step in your actual files and tests, and they show their work so you can interrupt them the moment they drift.

In practice, most agents use a pattern like this:

Frame the task: restate the goal and constraints from your prompt and the repo context.

Build a plan: decompose into steps with sources of truth (files, tests, docs, running services).

Act with tools: read/write files, run grep/search, execute tests, run the app, open a PR.

Observe and correct: parse outputs, detect failures, revise the plan, retry or request help.

Commit and summarize: produce a minimal diff, rationale, and follow-up tasks.
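The five steps above can be sketched as a small control loop. This is an illustration under stated assumptions, not how any particular product works: `plan` and `act` are hypothetical callables standing in for the model and its tools, and "correct" is reduced to a bounded retry rather than a real plan revision.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class StepResult:
    ok: bool
    output: str


@dataclass
class AgentRun:
    goal: str
    log: List[str] = field(default_factory=list)


def run_agent(goal: str,
              plan: Callable[[str], List[str]],
              act: Callable[[str], StepResult],
              max_retries: int = 2) -> AgentRun:
    run = AgentRun(goal=goal)
    run.log.append(f"frame: {goal}")               # 1. frame the task
    steps = plan(goal)                             # 2. build a plan
    for step in steps:
        for attempt in range(max_retries + 1):
            result = act(step)                     # 3. act with a tool
            run.log.append(f"{step} [attempt {attempt + 1}]: {result.output}")
            if result.ok:                          # 4. observe, retry on failure
                break
        else:
            # Retries exhausted: stop and hand control back to a human.
            run.log.append(f"stopped: {step} needs human help")
            return run
    run.log.append("summary: all steps passed")    # 5. summarize
    return run


# Hypothetical stand-ins: the test run fails once, then passes.
attempts = {}


def fake_act(step: str) -> StepResult:
    attempts[step] = attempts.get(step, 0) + 1
    if step == "run tests" and attempts[step] == 1:
        return StepResult(False, "1 failed")
    return StepResult(True, "ok")


demo = run_agent("rename helper",
                 lambda goal: ["write test", "edit file", "run tests"],
                 fake_act)
```

The log is the point: every action is recorded in order, so you can see where the run drifted and interrupt it.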

The tension is between autonomy and guardrails. More autonomy lets the agent unblock you at 2 a.m.; tighter guardrails keep it from paving over your repo. The most effective setups keep the agent’s authority narrow: let it write tests, run linters, and touch leaf files, but require explicit confirmation before schema changes or invasive refactors. Treat the agent like a fast junior: give it crisp acceptance tests and tool access, then demand small, reviewable commits.
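One way to express narrow authority is a path-based policy that the agent wrapper consults before each write. The sketch below is a hypothetical example: the patterns and the `decide` function are assumptions for illustration, not a feature of any tool listed here. Note that `*` in `fnmatch` patterns also matches `/`, so `tests/*` covers nested directories.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns; adjust them to your repo layout.
NEEDS_CONFIRMATION = ["migrations/*", "*/schema.sql", "infra/*", "auth/*"]
AUTO_ALLOWED = ["tests/*", "docs/*"]


def decide(path: str) -> str:
    """Return "allow" or "confirm" for a file the agent wants to write."""
    # Sensitive areas are checked first so they always win.
    if any(fnmatchcase(path, pattern) for pattern in NEEDS_CONFIRMATION):
        return "confirm"
    if any(fnmatchcase(path, pattern) for pattern in AUTO_ALLOWED):
        return "allow"
    # Anything unlisted falls back to the safer option.
    return "confirm"


decisions = {
    path: decide(path)
    for path in [
        "tests/unit/test_api.py",
        "migrations/0002_add_column.py",
        "src/utils.py",
    ]
}
```

Defaulting to "confirm" is a design choice: you widen the allow list as trust grows, instead of discovering a missing deny rule after the fact.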

Top 10 AI Coding Tools for 2026

Most products pitch features; these ten earn their slot by shipping value on real teams. Each entry names where the tool excels and what to watch when the stakes are high. If you can adopt only two, start at the top; otherwise work down the list until the returns stop compounding.

Cursor: Best all-around AI IDE for daily development. It blends deep inline edits, chat grounded in your repo, and agentic refactors with a reviewable diff workflow; watch context windows on monorepos and pin model versions for repeatability.

GitHub Copilot (Chat + inline): Best low-friction completion on mainstream stacks. It nails local edits, quick API usage, and test scaffolds; pair it with disciplined prompts to avoid bloated suggestions and prefer small, iterative acceptances.

Sourcegraph Cody: Best for code search + reasoning over large codebases. Its semantic search and embeddings reduce spelunking time dramatically; keep an eye on privacy settings and repository indexing scope.

JetBrains AI Assistant: Best for JVM and strongly-typed ecosystems inside JetBrains IDEs. It leverages the project model, inspections, and refactorings; expect stronger guidance on types and nullability, weaker on heterogeneous polyglot repos.

Windsurf (by Codeium): Best for “vibe coding” sessions that move from idea to working prototype fast. Its chat-first UI and workspace memory encourage flow; constrain it with tests when hopping from prototype to production.

Continue.dev: Best open-source in-editor assistant you can self-host or wire to local models. It’s highly hackable, great for privacy-sensitive orgs; you own reliability, so invest in model selection and prompt presets.

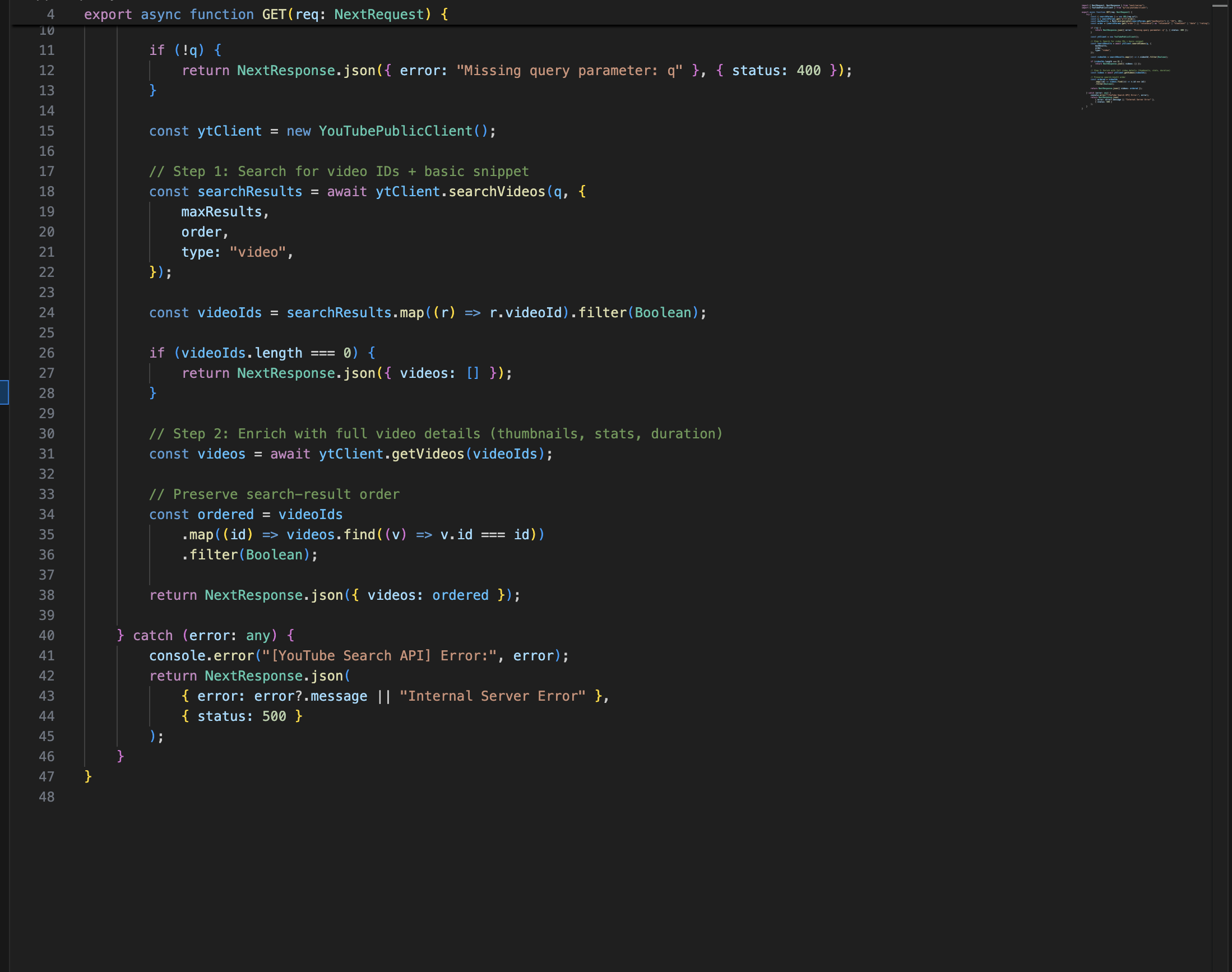

Aider: Best CLI-first agent for surgical, diff-driven changes. It reads the repo, proposes minimal patches, and keeps the conversation in your terminal; monitor file inclusion to avoid partial-context edits.

Supermaven: Best for aggressive autocomplete that feels almost telepathic. It accelerates routine coding and boilerplate; use with discipline, or you will accept plausible but subtly wrong code.

Replit Agent: Best for rapid full-stack app spikes and demos. It orchestrates scaffolding, deployment, and iteration in one place; production teams should export to their stack early to avoid lock-in.

Bolt.new (StackBlitz): Best for front-end prototypes that need live hosting now. It generates working Next.js/Tailwind UIs quickly; constrain it with design tokens and component libraries to keep output on-brand.

If a tool cannot show you exactly what it changed and why—preferably as a minimal diff with a rationale—it does not belong in your daily driver stack.

Pros of AI Coding Tools

The payoff is not just speed; it is the compounding of correctness. Assistants that write tests first, propose minimal diffs, and cite docs reduce the rework tax you usually pay after the first “it runs” moment. The leverage shows up across the lifecycle, not just at the keyboard.

Less time on glue code: CRUD, schema plumbing, error handling, and scaffolds become near-instant.

Faster codebase comprehension: semantic search plus chat collapses hours of spelunking into minutes.

Tighter feedback loops: on-demand explanations, quick test generation, and inline refactors shrink “spec to commit.”

Knowledge transfer: junior devs climb steep stacks faster with grounded answers in your own repo.

Accessibility of best practices: linters, patterns, and perf hints surface proactively.

The best ROI comes when you constrain the tool with tests, types, and style guides so its speed compounds into reliability.

Cons of AI Coding Tools

Every acceleration has a skid risk. These tools are bumpers, not rails, and the most dangerous failures are subtle: slightly misread APIs, stale mental models of your data, or quiet security regressions hidden in helpful scaffolds. Senior engineers feel the pain most because they guard the invariants.

Hallucinated APIs or flags: confident nonsense that compiles but fails at runtime or in edge cases.

Context illusions: large windows still miss the one file that matters; tools overfit to recent edits.

Diff bloat: multi-file changes that look helpful but tangle review and rollback.

Security drift: permissive CORS, weak input validation, or logging secrets slipped in by “helpful” code.

Cost opacity: chatty agents that run tests repeatedly can spike spend without an obvious benefit.

Reduce risk by enforcing small diffs, pre-commit checks, repo-level policies, and explicit human gates for schema, auth, and infra changes.
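As one example of enforcing small diffs, a pre-commit hook could feed the output of `git diff --cached --numstat` into a budget check like the sketch below. The function name and the thresholds are hypothetical; the input format (added, deleted, and path separated by tabs, with `-` for binary files) is what `--numstat` prints.

```python
from typing import List


def check_diff_budget(numstat: str,
                      max_files: int = 5,
                      max_lines: int = 200) -> List[str]:
    """Return a list of budget violations for `git diff --numstat` output."""
    files = 0
    lines = 0
    for row in numstat.strip().splitlines():
        added, deleted, _path = row.split("\t", 2)
        files += 1
        # Binary files report "-" for both counts; count them as 0 lines.
        if added != "-":
            lines += int(added) + int(deleted)

    problems = []
    if files > max_files:
        problems.append(f"{files} files changed (limit {max_files})")
    if lines > max_lines:
        problems.append(f"{lines} lines changed (limit {max_lines})")
    return problems


# Hypothetical numstat output: two text files and one binary file.
sample = "12\t3\tsrc/api.py\n40\t0\ttests/test_api.py\n-\t-\tassets/logo.png\n"

within_budget = check_diff_budget(sample)
over_budget = check_diff_budget(sample, max_files=2, max_lines=50)
```

An empty result means the change fits the budget; anything else is a prompt to split the commit before review, not after.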

Future of AI Coding Tools: The IDE becomes an orchestrator, not an editor

We are moving from assistants that type to systems that prove. Expect editors to orchestrate models, tools, and tests as first-class citizens. The killer feature will not be a smarter autocomplete; it will be a tighter contract between intent and verifiable outcomes.

First-class test-driven agents: agents that start with failing tests and refuse to merge until they pass.

Code graph grounding: models reason over call graphs and data flow, not just tokens, to avoid drift.

On-device and local-first: privacy-friendly, fast feedback with local LLMs for 80% of edits, cloud for the hard 20%.

Policy-aware coding: security, compliance, and style rules enforced during generation, not after.

PR-quality automation: structured rationales, risk diffs, and impact analysis attached to every change.

The winning tools will make “prove it” as fast as “type it.”

Conclusion

Your stack does not need to be fashionable; it needs to be compounding. Pick one editor-native assistant for daily flow and one search-or-agent layer for repo-scale reasoning, then wire both to your tests and policies. Everything else is optional.

Depth beats breadth: master a tight toolchain, measure verified commits, and let the rest of the hype pass you by.